Data analytics in the medical laboratory: Progress, gaps, and persistent barriers

This year’s MLO State of the Industry (SOI) Survey Article on Data Analytics explored how medical laboratories are navigating digital transformation amid mounting operational, financial, and workforce pressures.

A total of 127 medical laboratory professionals participated in the survey, with most working in hospital labs (62%) and holding lab manager, administrator, supervisor, or director roles (48%). Respondents represented a broad range of lab sizes, from small independent labs to large, multi-site organizations.

Alongside the subjective survey data, MLO presents expert insights from laboratory professionals and vendors in this space.

Five top survey findings:

- Reported LIS infrastructure shifts away from on-prem, with use of in-house lab information system (LIS) software/servers dropping to 25% in 2026, down from 52% in 2025, and 70% in 2024. Cloud-based LIS adoption increased modestly to 24%.

- Digital enablement is expanding beyond orders/results, with increased adoption of electronic QA/QC, scheduling, and inventory management functions.

- LIS/EHR interoperability and data integration remain persistent barriers, reported by more than half of respondents.

- Data quality and security challenges stem equally from system fragmentation and workforce readiness, including staff training and literacy gaps.

- Interest in AI is growing, but adoption remains limited, with only 7% reporting production use and most labs prioritizing foundational analytics investments.

Lab technology system infrastructure trends

Survey results point to incremental LIS infrastructure change rather than broad transformation. Most laboratories continue to rely on LIS platforms embedded in an enterprise-wide electronic health record (EHR) (53%) or on stand-alone LIS platforms (32%), while use of on-premises servers continues to decline (25%, down from 52% in 2025 and 70% in 2024). Cloud LIS adoption remains limited (24%), constrained by organizational policy, even as electronic workflows expand.

One medical lab professional commented that their lab had integrated with its hospital information system (HIS), software server systems, and cloud-based systems, noting how “no solution can seem to meet all our needs.”

More than three-quarters of respondents (76%) reported that cloud SaaS laboratory solutions are not currently permitted within their organizations, underscoring ongoing governance and security concerns.

Ed Price, director of Information Systems, Computer Service & Support, shared his thoughts on how vendor-lab partnerships are evolving around data governance, cloud adoption, and compliance: “Partnerships are getting more formal with shared responsibility models, standardized security attestations, tighter vendor due diligence, and clearer policies for access, retention, and auditability rather than informal handshake deals.

“Cloud adoption is moving forward with stronger controls like segmentation, least privilege access, comprehensive logging, and compliance alignment. The goal is for governance to be designed in from the start, not added later.”

Electronic workflows and integration

Electronic orders and results remain the most mature digital functions (89%), followed by analyzer integration (73%). Year-over-year growth was reported in electronic scheduling (39%, up from 25%), QA/QC (65%, up from 54%), and inventory management/supply chain management (31%, up from 23%), signaling broader operational digitization. However, respondents reported declines in adoption of point-of-care testing (POCT) management (37%, down from 41%) and customer service tools (17%, down from 20%).

Interoperability challenges continue to limit the impact of electronic workflows. More than half of respondents (54%) cited LIS and EHR integration as stumbling blocks to automation and analytics adoption.

Amanda J. Lewis, MLS(ASCP)CM, quality assurance supervisor, Abilene Market Laboratories, Abilene, Texas, commented on how these issues impact her lab’s operations: “Interoperability gaps between the LIS, instruments, and EHR create daily challenges that hamper automation, innovation, and operational efficiency. Migrating to interoperable APIs like Epic is costly and labor-intensive.

“At the same time, legacy, vendor-specific systems and fragmented interfaces force staff to manually reconcile duplicate orders and rely on custom SQL and Excel workarounds to produce analytics. Limited functionality and outdated infrastructure further constrain what labs can afford and implement, ultimately slowing clinicians and lab staff and hindering process improvements.”

Cyndee Jones, MSHCA, CLS, senior director, Laboratory Services, Valley Health Laboratory Administration, Winchester, Va., spoke to interoperability constraints and their impact on data sharing between systems: “The technology/testing does not have an automated way of getting into the patient record. This can be from a lack of interface or driver for automated analyzers, a lack of mechanism to scan in test kit information, and poor structure/functionality within the EHR for resulting tests outside of traditional laboratory information systems (e.g., POCT, physician office labs).

“Often, without automated processes testing is documented on paper and scanned into the chart. This process makes results hard to find and unable to be integrated in the same way as discrete data points.”

Marci Dop, VP Enterprise Lab Operations, ELLKAY, offered a vendor’s perspective on system connectivity and data management: “Interoperability is still a challenge because many labs still use connections that simply move data from one system to another. Real progress depends on reliable patient identification across systems, standardized orders and results, and data that is consistent, trusted, and usable for reporting and clinical decisions.”

Lisa-Jean Clifford, President at Gestalt Diagnostics, added, “One of the biggest issues is the transparency and terminology being used by various vendors and solution providers when using the word interoperability. There are varying degrees of integration, which of course carry varying degrees of value to the laboratory and workflows. A vendor who is using the word interoperable as interchangeable with interfacing is not doing a full, real-time, bi-directional data exchange of all discrete data. Interoperability means open architecture, flexible workflow support and real-time data exchange between multiple differing applications and data sets.”

She continued, “Another issue is that some vendors are not willing to openly exchange this data even if they are able to. There is a bit of a turf war when a vendor wants to maintain complete control over the customer vs. providing a fully functioning solution that is best for the customer or end user.”

Data quality and security trends

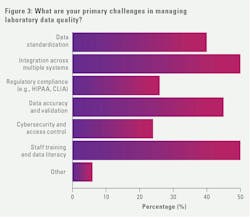

When asked about the primary challenges they face in managing laboratory data quality, respondents ranked integration across multiple systems (50%) and staff training and data literacy (50%) highest, followed by data accuracy/validation (45%) and data standardization (39%).

Vy Nguyen, informatics marketing manager, Clinical Diagnostics Group, Bio-Rad, commented on how vendors can help with their customers’ data challenges: “Vendors are helping labs improve trust in their data by building solutions that prioritize seamless integration with existing systems and embed validation checks at the point of entry, reducing manual rework.

“They’re also investing in intuitive user experiences and contextual guidance, so that staff at all levels—not just data specialists—can understand, interpret, and act on data confidently without added complexity.”

Lewis offered her thoughts on the challenges of staff education and training in this area: “Significant skill gaps persist due to minimal efforts to upskill workers. Manual data entry errors and limited data literacy undermine analytics and clinical outcomes. Consistent data practices, governance, automated validation, and targeted training are needed, yet investment and incentives remain scarce.”

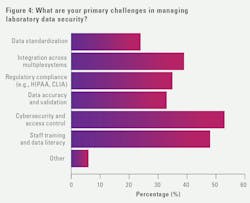

Turning to challenges with data security, cybersecurity/access control topped the list (53%), followed by staff training/data literacy (48%). Most laboratory professionals reported that their organizations have dedicated cybersecurity resources (65%) and vendor risk assessment requirements (72%).

Performance KPIs, analytics, and forecasting trends

Laboratories continue to prioritize operational key performance indicators (KPIs), including turnaround time (87%), quality improvement initiatives (80%), cost per test (57%), and staff productivity goals (48%). Benchmarking practices vary widely, with roughly one-third (35%) comparing performance externally, just over one-quarter (27%) benchmarking internally across sites/departments, and the remainder not benchmarking at all.

Lee B. Springer, Ph.D., senior VP of Operations & Strategic Development, LabSavvy, contrasted operational and tactical analytics: “Operational analytics get you the ‘what’ and ‘why’ to ensure smooth operations but not the tactical ‘how’ which is more directly linked to your strategic analytics or the direction ‘where’ the business is heading. Operational analytics will get you a TAT percentage, where tactical analytics will get you a TAT to ER admit ratio or TAT/productivity value.”

Regarding productivity, Jones commented on the criticality of this metric and the challenges of measuring it: “Staffing productivity is the performance indicator that is the most critical for us right now. The target is established by evaluating all similar sized laboratories and how many employees are used (e.g., # of employees per # of billable tests) then establishing what is considered the most ‘efficient’ for that compare group.

“This metric is apart from anything associated with quality, reliability, complexity of testing, or amount of test systems or methods used. The challenge is that the more understaffed all laboratories become, the more ‘efficient’ they appear on paper. This ends up moving the bar for all laboratories to a point that may impact employee satisfaction, retention, and quality of service.”

Turning to KPIs that help lab leaders understand their bottom line, Stephanie Denham, XiFin VP, RCM Systems and Analytics, commented: “Accurate cost-per-test tracking, paired with test level profitability analysis, enables labs to understand the true economics of their business. Labs need visibility across the entire lifecycle of an order - from order capture, through testing, to billing and reimbursement - to identify where costs are occurring, where inefficiencies exist, or where revenue is leaking.

“Ultimately, the labs that achieve stronger financial visibility are those that align data with process. When you can clearly see how costs map to every step of the revenue cycle, you can prioritize the right areas for optimization and automation. This ensures that efforts to reduce cost-to-collect deliver the highest possible return—without disrupting clinical operations.”
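As a rough illustration of the cost-per-test and test-level profitability tracking Denham describes, the Python sketch below works through the arithmetic. The cost categories and dollar amounts are hypothetical, and real cost allocation across the order lifecycle is considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class AssayEconomics:
    """Hypothetical totals for one assay over one reporting period."""
    name: str
    volume: int            # tests performed
    reagent_cost: float    # total reagent and consumable spend
    labor_cost: float      # total labor allocated to this assay
    billing_cost: float    # total cost to bill and collect
    reimbursement: float   # total payments actually received

    @property
    def cost_per_test(self) -> float:
        total = self.reagent_cost + self.labor_cost + self.billing_cost
        return total / self.volume

    @property
    def margin_per_test(self) -> float:
        return self.reimbursement / self.volume - self.cost_per_test


# Invented figures for illustration only.
assays = [
    AssayEconomics("HbA1c", 1_000, 4_000.0, 3_000.0, 1_000.0, 12_000.0),
    AssayEconomics("Troponin", 500, 6_000.0, 2_500.0, 1_500.0, 9_000.0),
]

for a in assays:
    print(f"{a.name}: cost/test ${a.cost_per_test:.2f}, "
          f"margin/test ${a.margin_per_test:.2f}")
```

Here the hypothetical troponin assay loses $2.00 per test once billing costs are included, the kind of leak that stays hidden when only reagent and labor costs are tracked.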

Analytics adoption increased modestly compared with last year, with more labs using data analytics across all aspects of lab management (20%, up from 12%). However, analytics tool maturity remains uneven, with many organizations relying on tools integrated with their LIS (36%). Fewer respondents reported using tools that are part of their LIS (27%, down from 32%) or separate tools for data analytics (17%, down from 27%).

When asked about data visualization or dashboard tools used for reporting and decision-making, just over half of lab professionals (51%) reported using built-in LIS dashboards, followed by instrument-provided analytics (33%), custom-built internal dashboards (28%), and third-party business intelligence (BI) platforms (20%). Additionally, 19% reported no current use of data analytics tools.

Springer offered his interpretation of the trend toward LIS-native dashboards or instrument analytics over third-party BI adoption: “It signals that the laboratory is still looked at as an ancillary department vs. a business service line. Labs need to see themselves and push to be seen as a business service line that warrants systems with robust predictive analytics to help create business intelligence models that drive success. Looking outside of the lab industry for analytics solutions is also key to creating models that are adaptable to lab type, market and clients served.”

Price spoke to why labs tend to rely on built-in analytics tools, noting how AI can serve as a bridge to broader analytics capabilities: “Labs lean on LIS-native and instrument analytics because they’re turnkey, familiar, and often sufficient for day-to-day operational decisions without the added cost and complexity of a separate analytics platform. It signals many labs are still building readiness - standardizing definitions, improving data quality, and tightening governance - before expanding into broader, enterprise-wide analytics.

“AI can also help bridge the gap by surfacing insights and answering common questions directly from existing data while that foundation is being built.”

Looking at the data that feeds their lab analytics, roughly one-third of respondents (33%) reported regularly integrating internal analytics with external data sources, while 39% reported no integration.

Nearly one-quarter (24%) reported that their data sources are refreshed in real time, and the same percentage (24%) reported daily data refreshes.

Use of electronic management forecasting declined year-over-year across test utilization (48%, down from 68%), staffing levels (44%, down from 55%), and workloads (49%, down from 55%). More than three-quarters of lab professionals (76%) reported no electronic forecasting tools, indicating continued reliance on manual methods.

Test tracking trends

Reported tracking of infectious disease test volumes declined across multiple categories compared with last year, including influenza (50%, down from 67%), COVID-19 (57%, down from 73%), STI/HIV (30%, down from 45%), and strep (32%, down from 45%). New tracking categories introduced this year, HbA1c and troponin, were reported by roughly one-third of respondents, suggesting evolving priorities tied to chronic disease management and cardiac care.

Jones spoke to how staffing shortages impact labs’ abilities to provide broad testing portfolios: “There are not enough people going into the laboratory career fields. There is also an extreme lack of college programs available to meet the industry needs, which further impacts the availability of people coming into this career field. [Because of this], many laboratories have sent out testing and operate with a limited test menu.

“This common business practice has decreased the number of students who can meet the full gamut of clinical training to qualify for their degree. This means a college cannot graduate enough students to make the program financially successful from their perspective. The resulting program closures further compound the workforce availability problem.”

When asked how better integration between diagnostic platforms and analytics can help labs maintain visibility without increasing manual effort, George Wierschem, MBA, MT(ASCP), senior global product manager, Informatics, QuidelOrtho, answered: “When diagnostic platforms are fully integrated with centralized analytics tools, labs may gain real-time visibility into testing activity across supported instruments and sites without relying on manual effort.

“Automated dashboards and analytics can help consolidate results from multiple sources, enabling lab professionals to monitor operational trends, performance indicators and workflow changes, and drive meaningful patient care decisions, especially as operational complexity increases.”

Denham commented on the financial value of lab/provider data integration: “Labs need the ability to drill down to the ordering physician level. Understanding a provider’s test mix and payor mix is vital, especially when certain clients may predominantly send Medicaid or uninsured patients, negatively affecting profitability. These insights help labs segment their client base, refine service strategies, and make informed decisions about resource allocation.”

AI interest and usage trends

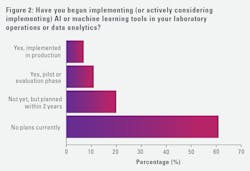

Interest in AI continues to grow, but adoption remains limited and uneven. Only a small percentage of laboratory professionals report active use of AI (7%) or AI pilot implementations (11%), while most (61%) have no near-term plans.

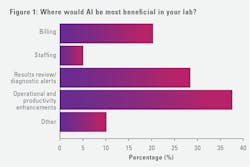

Perceived value of AI in laboratory operations centered on operational and productivity enhancements (37%), reviewing results/diagnostic alerts (28%), and billing-related use cases (20%). AI governance approaches vary, with respondents describing system-led, lab-led, and collaborative models, as well as uncertainty regarding future ownership and oversight.

“AI in healthcare is still developing…outdated systems and fragmented data make centralized, validated databases essential to its effectiveness,” said Lewis. “Starting with small-scale pilots, such as anomaly detection or maintenance prediction, may help build trust before expanding to critical clinical workflows.”

“The number one thing that must happen for increased adoption and use of AI in the clinical laboratory space is approval from the FDA,” said Jones. “Without their approval, the CLIA complexity increases and the task to maintain regulatory compliance and accreditation standards becomes a daunting task. Many laboratories do not have resources to support that.”

Nguyen pointed out how labs must prioritize foundational data and governance when considering AI tools: “Labs often overestimate AI’s readiness for fully autonomous interpretation or decision-making, not recognizing that these tools still require well-curated data and expert oversight to be both reliable and actionable.”

She offered up the following AI use cases where labs can find tangible value today: “Targeted applications like predictive maintenance for instruments, automated result flagging to support technologists, and workflow optimization that reduces turnaround times.”

According to Mike Hampton, chief commercial officer (CCO), Sapio Sciences, “The labs making progress are embedding AI tools into workflows and applying platform-level intelligence, so context, governance, and decision-making remain connected. Disconnected tools can drive shadow AI and fragment workflows.”

He has seen AI deliver value to labs through use cases such as “automating result interpretation and providing real-time assistance during routine lab processes.” He explained that “these gains are typically localized and task-specific, which is where many labs begin to overestimate readiness.”

Clifford reported observing several Gestalt customers using AI for primary diagnosis and teaching purposes. She continued, “However, I have heard lower than realized numbers in discussions with other vendors, including AI partners of ours. The benefits we hear from several of our customers align with patient safety, accuracy, support of the pathologist in workflow enhancement and confidence in reinforcing their diagnosis. The main purpose for adoption we have heard is: 1. Providing the best possible patient care; and 2. An aid for the pathologist.”

Looking ahead

Topping the list of current and short-term (next two years) challenges faced by lab professionals is staffing (#1), followed by funding (#2) and technology (#3). Strategic IT priorities are focused on LIS replacement (20%) and infrastructure and platform development (18%), followed by revenue cycle management optimization (13%) and data analytics optimization to support lab management (13%).

Advanced initiatives such as digital pathology implementation (6%), interconnectivity with reference and public health labs (4%), and adopting AI/ML tools (4%) ranked lower, suggesting that laboratories are focused on stabilizing core operations.

When asked what will distinguish labs that successfully mature their analytics capabilities over the next three years, Wierschem stated: “Based on my observation, labs that successfully advance their analytics capabilities tend to look beyond static reports toward cloud-based platforms that securely manage big data integrated from multiple sources and embed analytics directly into daily lab workflows, where permitted.

“Over time, these analytics capabilities may enable long-term data analysis to potentially detect and alert labs to shifts or trends, helping them to proactively address areas that could affect patient outcomes or their laboratory business.”

Moving forward, Hampton predicts labs that “treat analytics and AI as part of a unified, configurable lab platform rather than standalone reporting tools” will pull ahead of the curve. He added, “The differentiator will be applying AI across workflows with human oversight, surfacing trends and system-level issues early enough to change outcomes while maintaining governance and control.”

Dop believes labs that embed analytics directly into lab workflows, rather than producing more dashboards, will be best positioned to move insights into action. She noted how they will have the ability to use data “to proactively manage turnaround time, capacity, quality issues, and outreach performance.” She added, “They will also be better positioned to adopt predictive and AI-driven use cases because their data is trusted, timely, and usable.”

About the Author

Kara Nadeau has 20+ years of experience as a healthcare/medical/technology writer, having served medical device and pharmaceutical manufacturers, healthcare facilities, software and service providers, non-profit organizations and industry associations.